Recently, I got into a slap fight with PolitiFactBias.com (PFB). The self-proclaimed PolitiFact whistleblower bristled at my claim that my estimate of the partisan bias among two leading fact checkers is superior to theirs. A recurring theme in the debate was PFB's finding that PolitiFact.com's "Pants on Fire" category, which PolitiFact reserves for egregious statements, occurs much more often for Republicans than for Democrats. Because the "Pants on Fire" category is the most subjective of the categories in PolitiFact's Truth-O-Meter, PFB believes the comparison is evidence of PolitiFact's liberal bias.

I agree with PFB that the "Pants on Fire" category is highly subjective. That's why, when I calculate my factuality scores, I treat the category the same as I treat the "False" category. Yet treating the two categories the same doesn't account for selection bias. Perhaps Republicans collect more "Pants on Fire" rulings because PolitiFact is more likely to pick their ridiculous statements for rating, not because Republicans make ridiculous statements more often than Democrats.

One way to adjust for selection bias on ridiculous statements is to pretend that "Pants on Fire" rulings never happened. Presumably, the rest of the Truth-O-Meter categories are less susceptible to partisan bias in the selection and rating of statements. Therefore, the malarkey scores calculated from a report card excluding "Pants on Fire" statements might be a cleaner estimate of the factuality of an individual or group.

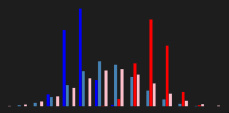

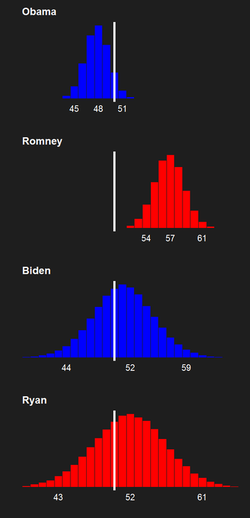

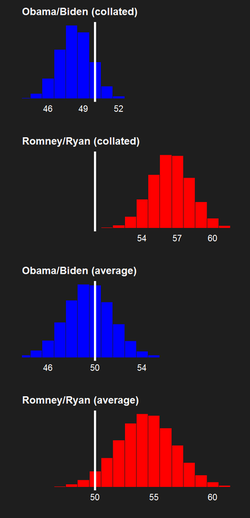

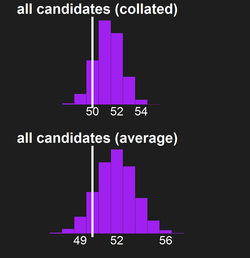

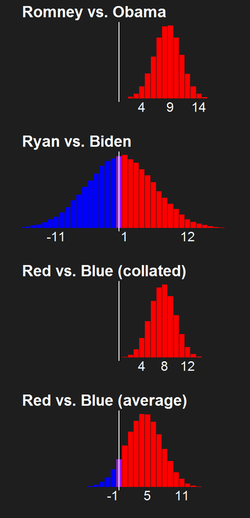

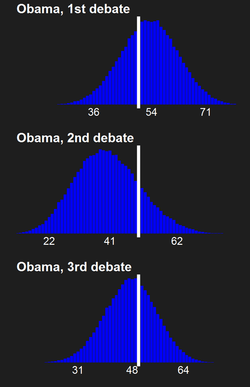

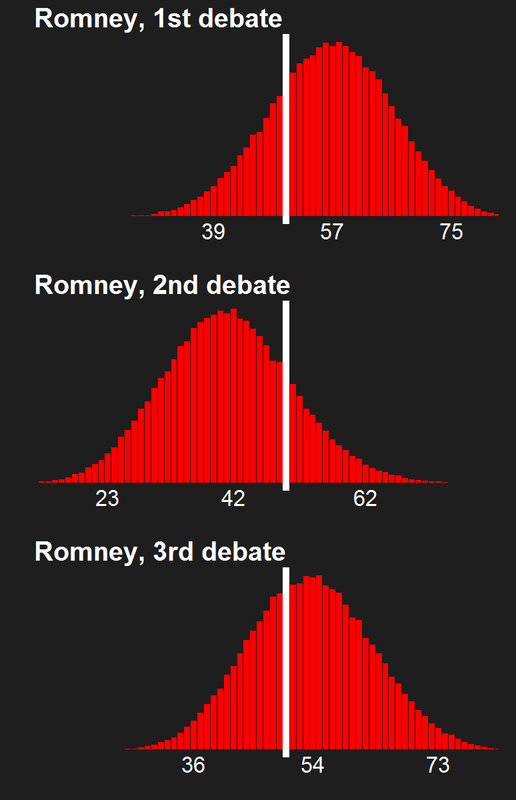

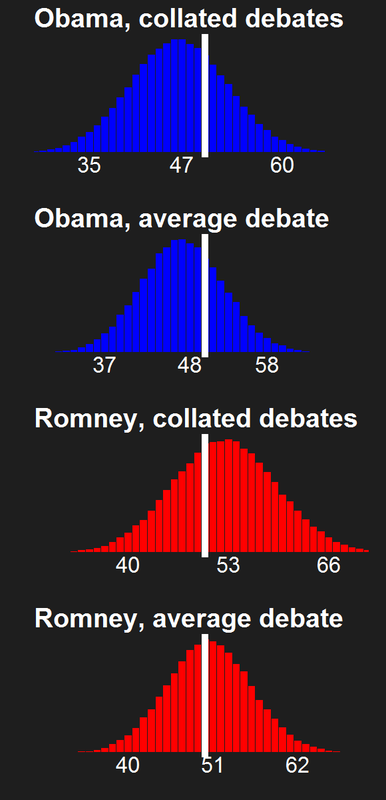

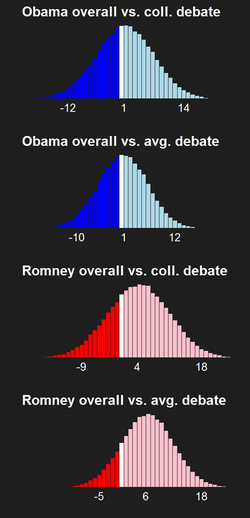

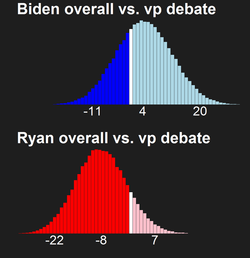

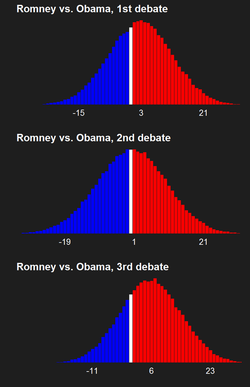

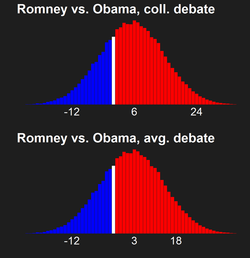

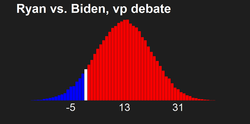

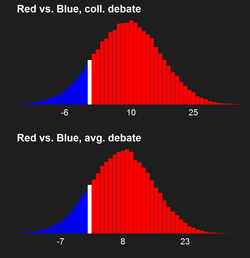

To examine the effect of excluding the "Pants on Fire" category on the comparison of malarkey scores between Republicans and Democrats, I used Malark-O-Meter's simulation methods to statistically compare the collated malarkey scores of Rymney and Obiden after excluding the "Pants on Fire" statements from the observed PolitiFact report cards. The collated malarkey score adds up the statements in each category across all the individuals in a certain group (such as a campaign ticket), and then calculates a malarkey score from the collated report card. I examine the range of values of the modified comparison in which we have 95% statistical confidence. I chose the collated malarkey score comparison because it is one of the comparisons that my original analysis was most certain about, and because the collated malarkey score is a summary measure of the falsehood in statements made collectively by a campaign ticket.
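For readers who want to see the collation step concretely, here is a minimal Python sketch. It is not Malark-O-Meter's actual code. The category weights (0 for "True" up to 100 for "False", with "Pants on Fire" treated the same as "False") are an assumption for illustration, the function names `collate` and `malarkey_score` are hypothetical, and the counts are made up rather than taken from any real report card.

```python
from collections import Counter

# ASSUMED weights, for illustration only: 0 = entirely true, 100 = entirely false.
# "Pants on Fire" is weighted the same as "False", as described in the post.
WEIGHTS = {
    "True": 0, "Mostly True": 25, "Half True": 50,
    "Mostly False": 75, "False": 100, "Pants on Fire": 100,
}

def collate(report_cards):
    """Add up the rulings in each category across several report cards."""
    total = Counter()
    for card in report_cards:
        total.update(card)
    return total

def malarkey_score(card, exclude=()):
    """Weighted average falsehood of a report card, skipping excluded categories."""
    kept = {cat: n for cat, n in card.items() if cat not in exclude}
    n_rulings = sum(kept.values())
    if n_rulings == 0:
        raise ValueError("no rulings left after exclusion")
    return sum(WEIGHTS[cat] * n for cat, n in kept.items()) / n_rulings

# HYPOTHETICAL counts for two candidates on one ticket (not real data).
candidate_a = {"True": 10, "Mostly True": 10, "Half True": 10,
               "Mostly False": 5, "False": 4, "Pants on Fire": 1}
candidate_b = {"True": 5, "Mostly True": 5, "Half True": 5,
               "Mostly False": 3, "False": 2, "Pants on Fire": 0}

ticket = collate([candidate_a, candidate_b])
with_pof = malarkey_score(ticket)
without_pof = malarkey_score(ticket, exclude={"Pants on Fire"})
print(round(with_pof, 2), round(without_pof, 2))
```

With these made-up counts, dropping the single "Pants on Fire" ruling moves the collated score by about one point, which is the kind of small shift the rest of this post is about.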

My original analysis suggested that Rymney spews 1.17 times more malarkey than Obiden (either that or fact checkers have 17% liberal bias). Because we have a small sample of fact checked statements, however, we can only be 95% confident that the true comparison (or the true partisan bias) leads to the conclusion that Rymney spewed between 1.08 and 1.27 times more malarkey than Obiden. We can, however, be 99.99% certain that Rymney spewed more malarkey than Obiden, regardless of how much more.
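To give a feel for where an interval and a probability like these can come from, here is a rough simulation sketch in Python. It is a stand-in, not necessarily the method Malark-O-Meter uses: I am assuming that each ticket's category probabilities are drawn from a Dirichlet distribution with a uniform prior, that the score uses weights from 0 to 100 over five categories, and that the comparison is a ratio of scores. The counts are hypothetical, and strictly speaking this approach yields a credible interval rather than a classical confidence interval.

```python
import random

# ASSUMED weights for five categories, "True" through "False".
WEIGHTS = [0, 25, 50, 75, 100]

def sample_dirichlet(counts, rng):
    """Draw category probabilities from Dirichlet(counts + 1) via gamma draws."""
    draws = [rng.gammavariate(c + 1, 1.0) for c in counts]
    total = sum(draws)
    return [d / total for d in draws]

def score(probs):
    """Expected falsehood under the assumed weights."""
    return sum(w * p for w, p in zip(WEIGHTS, probs))

def compare(counts_a, counts_b, n_sims=10000, seed=0):
    """Simulate the ratio score_a / score_b and summarize it."""
    rng = random.Random(seed)
    ratios = sorted(
        score(sample_dirichlet(counts_a, rng)) / score(sample_dirichlet(counts_b, rng))
        for _ in range(n_sims)
    )
    mean = sum(ratios) / n_sims
    lower = ratios[int(0.025 * n_sims)]       # 2.5th percentile
    upper = ratios[int(0.975 * n_sims) - 1]   # 97.5th percentile
    prob_a_higher = sum(r > 1 for r in ratios) / n_sims
    return mean, lower, upper, prob_a_higher

# HYPOTHETICAL collated counts per category (not real report cards).
ticket_a = [20, 25, 30, 25, 20]
ticket_b = [30, 30, 30, 20, 10]

mean, lower, upper, prob_a_higher = compare(ticket_a, ticket_b)
print(f"ratio {mean:.2f}, 95% interval [{lower:.2f}, {upper:.2f}], "
      f"P(A > B) = {prob_a_higher:.4f}")
```

The design point is that the interval reflects how few statements were rated: smaller counts give wider simulated ranges for the ratio.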

After excluding the "Pants on Fire" category, you know what happens to the estimated difference between the two tickets and our degree of certainty in that difference? Not much. The mean comparison drops to Rymney spewing 1.14 times more malarkey than Obiden (a difference of 0.03 times, whatever that means!). The 95% confidence intervals shift a smidge left to show Rymney spewing between 1.05 and 1.24 times more malarkey than Obiden (notice that the width of the confidence intervals does not change). The probability that Rymney spewed more malarkey than Obiden plunges (sarcasm fully intended) to 99.87%. By the way, those decimals are probably meaningless for our purposes. Basically, we can be almost completely certain that Rymney's malarkey score is higher than Obiden's.

Why doesn't the comparison change that much after excluding the "Pants on Fire" rulings? There are two interacting, proximate reasons. First, the malarkey score is actually an average of malarkey scores calculated separately from the rulings of PolitiFact and The Fact Checker at The Washington Post. When I remove the "Pants on Fire" rulings from Truth-O-Meter report cards, it does nothing to The Fact Checker report cards or their associated malarkey scores.

Second, the number of "Pants on Fire" rulings is small compared to the number of other rulings. In fact, it is only 3% of the total sample of rulings across all four candidates, 2% of the Obiden collated report card, and 8% of the Rymney collated report card. So although Rymney has 4 times more "Pants on Fire" rulings than Obiden, it doesn't affect their malarkey scores from the Truth-O-Meter report cards much.

When you average one malarkey score that doesn't change all that much and another that doesn't change at all, the obvious result is that not much change happens.
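The arithmetic behind that is short enough to spell out. The sketch below assumes the combined score is a simple unweighted mean of the two fact checkers' scores, and the numbers are hypothetical, chosen only to show the halving effect.

```python
def averaged_score(truth_o_meter, fact_checker):
    """Unweighted mean of the two fact checkers' malarkey scores (an assumption)."""
    return (truth_o_meter + fact_checker) / 2

# HYPOTHETICAL scores: the Truth-O-Meter score drops 2 points after the
# exclusion, while The Fact Checker score is untouched.
before = averaged_score(50.0, 44.0)
after = averaged_score(48.0, 44.0)
change = before - after
print(before, after, change)
```

Whatever shift the exclusion causes in the Truth-O-Meter score, only half of it survives in the average, because the other half of the average never moved.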

What does this mean for the argument that including "Pants on Fire" rulings muddies the waters, even if I treat them the same as "False" rulings? It means that the differences I measure aren't affected heavily by the "Pants on Fire" bias, if it exists. So I'm just going to keep including them. This finding also lends credence to my argument that, if you want to cry foul on PolitiFact and other top fact checkers, you need to cry foul on the whole shebang, not just one type of subjective ruling.

If you want to cry foul on all of PolitiFact's rulings, you need to estimate the potential bias in all of their rulings. That's what I did a few days ago, but what PFB hasn't done. I suggested a better way for them to fulfill their mission of exposing PolitiFact as liberally biased (which they've tried to downplay as their mission, but it clearly is). Strangely, they don't want to take my advice. It's just as well, because my estimate of PolitiFact's bias (and their estimate) can just as easily be interpreted as an estimate of true party differences.